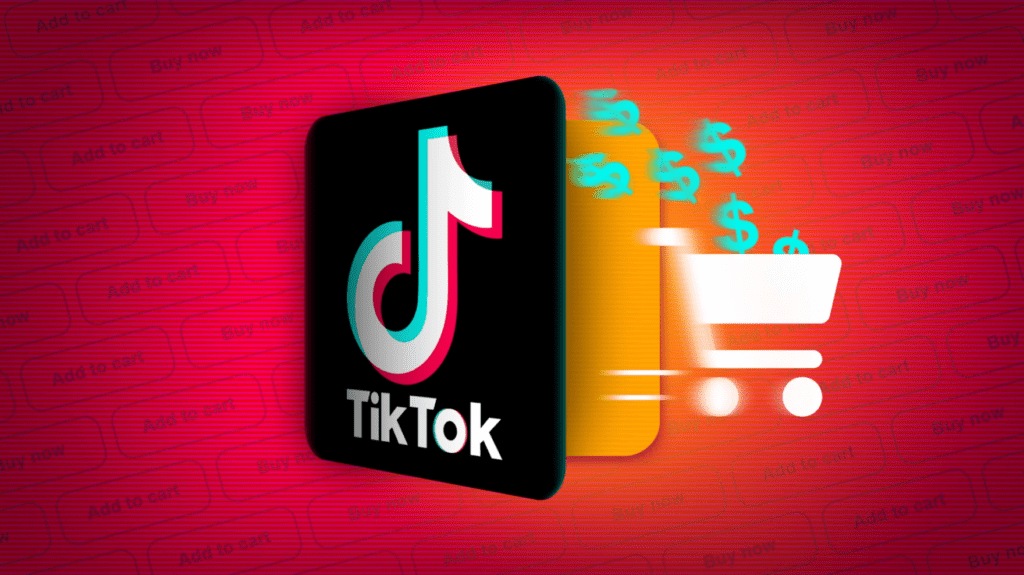

TikTok Lays Off Employees

ByteDance, the parent company of TikTok, is laying off hundreds of employees, mainly in Malaysia, as the social media giant turns increasingly to artificial intelligence (AI) for content moderation. The company has not given an exact figure, but according to a report from Reuters, fewer than 500 employees are affected by these changes.

This move is part of TikTok’s broader strategy to enhance its content moderation capabilities and streamline its global operations. The company has been balancing the use of AI technology and human moderators to review the vast amount of content shared on the platform daily. The shift toward AI is seen as a more efficient way to handle content moderation, though human oversight is still necessary for complex or nuanced decisions.

The Role of AI in Content Moderation

Content moderation is a critical function for platforms like TikTok, where millions of users upload videos every day. With this volume of content, ensuring that harmful or inappropriate material is removed quickly and efficiently is a constant challenge. TikTok, like other social media platforms, uses a combination of AI and human moderators to tackle this issue.

- Automated Detection: AI systems automatically detect and remove content that violates TikTok’s community guidelines. This includes content related to violence, hate speech, and explicit material. AI tools are fast and can process massive amounts of data in real time, making them crucial for managing TikTok’s global user base.

- Human Moderation: While AI can handle most of the straightforward cases, human moderators are still needed to assess content that requires more contextual understanding. For instance, AI might struggle with satire, political speech, or cultural nuances that could be misinterpreted by automated systems. Therefore, human moderators review content flagged by AI for further analysis before deciding whether to remove it.

The increasing reliance on AI for content moderation is likely one of the factors driving the job cuts in Malaysia and other locations. As AI becomes more sophisticated, fewer human moderators are required for the initial stages of content review. However, TikTok has not yet fully replaced its human moderation teams, as these employees are still vital for handling complex cases.

TikTok has stated that the layoffs are part of its effort to optimize its global operating model, particularly in content moderation. The company is restructuring to adapt to the changing needs of the platform as it scales and integrates more advanced AI solutions into its systems. While it did not specify which departments the layoffs are affecting, it is likely that a significant portion of those laid off were involved in content moderation, where AI is playing an increasingly central role.


TikTok has not yet responded to requests for additional comment from TechCrunch and other media outlets regarding these recent layoffs. However, the company did confirm to Reuters that fewer than 500 employees were affected by the cuts, mainly in Malaysia.

Previous Job Cuts at TikTok

This is not the first time TikTok has made cuts to its workforce. Earlier this year, the company initiated several rounds of layoffs across various departments and regions. In January, it laid off 60 employees from its sales and advertising teams. In April, more than 250 employees in Ireland were let go. This was followed in May by a reduction of around 1,000 employees, primarily affecting TikTok’s operations and marketing teams.

These previous job cuts, combined with the most recent layoffs, suggest that TikTok is actively streamlining its workforce, possibly to adjust to its rapid growth and to manage costs more effectively. As the platform continues to expand globally, it faces increasing scrutiny from regulators and lawmakers, particularly concerning data privacy and content moderation. The introduction of more AI-driven solutions may be a part of TikTok’s broader strategy to meet these challenges while keeping costs down.

The Future of Content Moderation on TikTok

TikTok’s shift toward greater reliance on AI in content moderation reflects a larger trend in the social media industry. Platforms are under growing pressure to moderate content more quickly and efficiently to prevent the spread of misinformation, harmful content, and violations of user safety. AI offers a way to handle these tasks at scale, but it is not without challenges.

- Limitations of AI: While AI can be incredibly effective for detecting certain types of content, it has limitations. For example, AI systems can misinterpret the context of posts or fail to understand cultural or linguistic nuances. These limitations mean that human moderators will likely remain a necessary part of content moderation, especially for more complex cases.

- Balancing Speed and Accuracy: The goal for platforms like TikTok is to find the right balance between speed and accuracy in content moderation. AI can quickly flag and remove inappropriate content, but the accuracy of these decisions improves when combined with human judgment. As TikTok continues to develop its moderation strategies, it will need to ensure that its systems remain effective while also protecting free speech and ensuring fairness in content evaluation.

The recent job cuts at TikTok, mainly in Malaysia, signal the company’s ongoing efforts to enhance its global content moderation system by integrating more AI technology. While these changes aim to improve efficiency, they come at the cost of human jobs, especially in content moderation roles. As AI continues to evolve, the landscape of content moderation will likely shift further, and TikTok, like other platforms, will need to balance technological innovation with the need for human oversight in complex cases.
